AG-UI: The Protocol Your AI Agent Frontend Has Been Missing

Every agent framework invents its own way to talk to frontends. LangChain has callbacks. CrewAI has its own streaming format. OpenAI's Assistants API has a proprietary run/step model. Anthropic's Messages API has a different streaming shape. If you swap your backend, you rewrite your frontend.

This is the state of agent-to-UI communication in 2026. There is no shared protocol. No common event vocabulary. No equivalent of HTTP for the agent layer.

AG-UI is an attempt to fix that. It defines a standard set of events that any agent backend can emit and any frontend can consume, streamed over SSE. We adopted it for our production AI prototyping tool, and it has become the most underappreciated piece of our architecture.

AG-UI in 60 Seconds

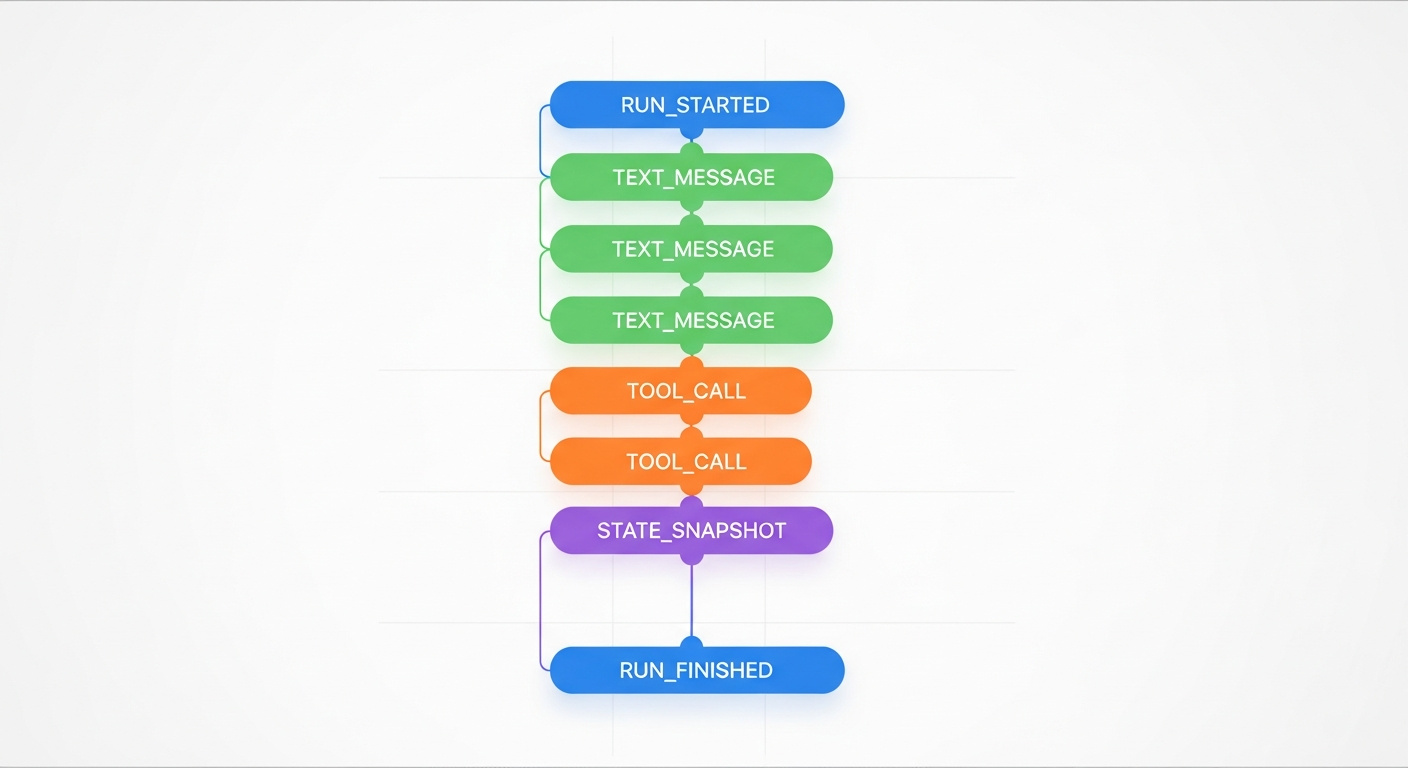

The protocol defines five categories of events. All of them follow the same base shape: a type field, an optional timestamp, and event-specific fields.

Lifecycle Events control the boundaries of an agent run:

- RUN_STARTED / RUN_FINISHED / RUN_ERROR (open and close every run)
- STEP_STARTED / STEP_FINISHED (optional, for granular progress)

Text Message Events stream text content:

- TEXT_MESSAGE_START (opens a message with a messageId)
- TEXT_MESSAGE_CONTENT (delivers text deltas)
- TEXT_MESSAGE_END (finalizes the message)

Tool Call Events expose tool execution:

- TOOL_CALL_START (tool name + ID)
- TOOL_CALL_ARGS (streaming JSON argument fragments)
- TOOL_CALL_END (marks the tool call complete)

State Management Events synchronize state:

- STATE_SNAPSHOT (full state replacement)
- STATE_DELTA (JSON Patch incremental updates)
- MESSAGES_SNAPSHOT (full conversation history)

Special Events handle extensibility:

- CUSTOM (application-defined events with a name + payload)
- RAW (passthrough from external systems)

That is the core protocol: sixteen event types that cover the full agent interaction lifecycle.
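To make that vocabulary concrete, here is a minimal Python sketch assuming plain dicts as the wire representation. The `EventType` enum mirrors the event names listed above; `make_event` is a hypothetical helper, not part of any AG-UI SDK, that stamps the shared base shape (a `type`, an optional `timestamp`, then event-specific fields) onto a payload.

```python
import time
from enum import Enum
from typing import Any


class EventType(str, Enum):
    # Lifecycle
    RUN_STARTED = "RUN_STARTED"
    RUN_FINISHED = "RUN_FINISHED"
    RUN_ERROR = "RUN_ERROR"
    STEP_STARTED = "STEP_STARTED"
    STEP_FINISHED = "STEP_FINISHED"
    # Text messages
    TEXT_MESSAGE_START = "TEXT_MESSAGE_START"
    TEXT_MESSAGE_CONTENT = "TEXT_MESSAGE_CONTENT"
    TEXT_MESSAGE_END = "TEXT_MESSAGE_END"
    # Tool calls
    TOOL_CALL_START = "TOOL_CALL_START"
    TOOL_CALL_ARGS = "TOOL_CALL_ARGS"
    TOOL_CALL_END = "TOOL_CALL_END"
    # State management
    STATE_SNAPSHOT = "STATE_SNAPSHOT"
    STATE_DELTA = "STATE_DELTA"
    MESSAGES_SNAPSHOT = "MESSAGES_SNAPSHOT"
    # Special
    CUSTOM = "CUSTOM"
    RAW = "RAW"


def make_event(event_type: EventType, timestamped: bool = False, **fields: Any) -> dict[str, Any]:
    """Build an event dict: type first, optional timestamp, then event-specific fields."""
    event: dict[str, Any] = {"type": event_type.value}
    if timestamped:
        # Epoch milliseconds; the unit is an assumption of this sketch.
        event["timestamp"] = int(time.time() * 1000)
    event.update(fields)
    return event
```

For example, `make_event(EventType.TEXT_MESSAGE_CONTENT, messageId="msg-2", delta="I'll build")` returns `{"type": "TEXT_MESSAGE_CONTENT", "messageId": "msg-2", "delta": "I'll build"}`.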

The full lifecycle of an AG-UI run: the core event types covering text streaming, tool execution, state sync, and run boundaries.


Why SSE, Not WebSockets

AG-UI uses Server-Sent Events as its transport layer. Three reasons:

- POST support. Unlike the browser's EventSource API (GET only), AG-UI clients use fetch with a ReadableStream. This lets you send a full JSON request body with the conversation history, state, and configuration.
- No connection upgrade. SSE runs over plain HTTP. No upgrade handshake, no state management, no ping/pong frames. The server writes events, the client reads them.
- One direction is enough. Agent runs are request/response. The client sends a message, the server streams events back. If the client needs to send another message, it makes a new request. Bidirectional communication adds complexity with no benefit here.
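Because the transport is plain SSE in the body of a POST response, both ends reduce to string handling. The sketch below uses only the Python standard library to frame events as `data:` lines and to reassemble them on the reading side, even when network chunks split a frame in half. The function names are hypothetical, and the parser deliberately handles only single-line `data:` fields with `\n` line endings, which is all the one-JSON-object-per-event format in this article needs; a full SSE parser also handles `event:`, `id:`, `\r\n`, and multi-line data.

```python
import json
from typing import Any, Iterable, Iterator


def encode_sse(event: dict[str, Any]) -> str:
    """Serialize one event as an SSE frame: a data line followed by a blank line."""
    return f"data: {json.dumps(event)}\n\n"


def decode_sse(chunks: Iterable[str]) -> Iterator[dict[str, Any]]:
    """Yield parsed events from text chunks that may split frames arbitrarily."""
    buffer = ""
    for chunk in chunks:
        buffer += chunk
        # A blank line terminates each frame; keep any trailing partial frame buffered.
        while "\n\n" in buffer:
            frame, buffer = buffer.split("\n\n", 1)
            for line in frame.splitlines():
                if line.startswith("data:"):
                    yield json.loads(line[len("data:"):].strip())
```

Buffering until the blank-line terminator is the key design choice: a `fetch` ReadableStream makes no promise that chunk boundaries line up with event boundaries.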

The request body is a RunAgentInput with seven fields:

```json
{
  "runId": "uuid-v4",
  "threadId": "persistent-conversation-id",
  "messages": [{ "id": "msg-1", "role": "user", "content": "Build me an app" }],
  "state": { "steps": [], "files_created": [], "preview_url": null },
  "tools": [],
  "context": [],
  "forwardedProps": {}
}
```
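On the client, the only per-request bookkeeping is minting a fresh `runId` while reusing the `threadId` for the whole conversation. A minimal Python sketch of building that body, assuming the seven field names shown above; `new_run_input` is a hypothetical helper, and the empty `tools`, `context`, and `forwardedProps` defaults simply match the example.

```python
import json
import uuid
from typing import Any


def new_run_input(
    thread_id: str,
    messages: list[dict[str, Any]],
    state: dict[str, Any],
) -> dict[str, Any]:
    """Build a RunAgentInput body: new runId per request, stable threadId per conversation."""
    return {
        "runId": str(uuid.uuid4()),  # unique for every run
        "threadId": thread_id,       # persists across turns
        "messages": messages,        # full conversation history so far
        "state": state,              # the frontend's current copy of shared state
        "tools": [],                 # no frontend-defined tools in this sketch
        "context": [],
        "forwardedProps": {},
    }


body = json.dumps(new_run_input(
    "persistent-conversation-id",
    [{"id": "msg-1", "role": "user", "content": "Build me an app"}],
    {"steps": [], "files_created": [], "preview_url": None},
))
```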

The response is a stream of SSE events:

```text
data: {"type": "RUN_STARTED", "threadId": "abc", "runId": "xyz"}

data: {"type": "TEXT_MESSAGE_START", "messageId": "msg-2", "role": "assistant"}

data: {"type": "TEXT_MESSAGE_CONTENT", "messageId": "msg-2", "delta": "I'll build"}

data: {"type": "TEXT_MESSAGE_CONTENT", "messageId": "msg-2", "delta": " a weather app."}

data: {"type": "TEXT_MESSAGE_END", "messageId": "msg-2"}

data: {"type": "TOOL_CALL_START", "toolCallId": "tc-1", "toolCallName": "write_file"}

data: {"type": "TOOL_CALL_ARGS", "toolCallId": "tc-1", "delta": "{\"path\": \"index.html\"}"}

data: {"type": "TOOL_CALL_END", "toolCallId": "tc-1"}

data: {"type": "STATE_SNAPSHOT", "snapshot": {"steps": [], "files_created": ["index.html"], "preview_url": null}}

data: {"type": "RUN_FINISHED", "threadId": "abc", "runId": "xyz"}
```
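On the receiving side, the frontend's job is to fold this stream into view state. Here is a minimal Python sketch of that fold, assuming events arrive already parsed into dicts; the `RunView` class and its fields are hypothetical stand-ins for whatever your UI state looks like. It concatenates text deltas per `messageId`, buffers `TOOL_CALL_ARGS` fragments per `toolCallId` and parses them only once `TOOL_CALL_END` arrives, and replaces state outright on `STATE_SNAPSHOT`.

```python
import json
from dataclasses import dataclass, field
from typing import Any


@dataclass
class RunView:
    messages: dict[str, str] = field(default_factory=dict)  # messageId -> text so far
    tool_calls: dict[str, dict[str, Any]] = field(default_factory=dict)  # toolCallId -> call
    state: dict[str, Any] = field(default_factory=dict)
    finished: bool = False

    def apply(self, event: dict[str, Any]) -> None:
        kind = event["type"]
        if kind == "TEXT_MESSAGE_START":
            self.messages[event["messageId"]] = ""
        elif kind == "TEXT_MESSAGE_CONTENT":
            self.messages[event["messageId"]] += event["delta"]
        elif kind == "TOOL_CALL_START":
            self.tool_calls[event["toolCallId"]] = {
                "name": event["toolCallName"], "raw_args": "", "args": None}
        elif kind == "TOOL_CALL_ARGS":
            # Fragments are partial JSON, so only concatenate until the call ends.
            self.tool_calls[event["toolCallId"]]["raw_args"] += event["delta"]
        elif kind == "TOOL_CALL_END":
            call = self.tool_calls[event["toolCallId"]]
            call["args"] = json.loads(call["raw_args"]) if call["raw_args"] else {}
        elif kind == "STATE_SNAPSHOT":
            self.state = event["snapshot"]  # full replacement, not a merge
        elif kind in ("RUN_FINISHED", "RUN_ERROR"):
            self.finished = True
        # Other event types are ignored in this sketch.
```

Feeding the text events from the stream above through `RunView.apply` in order leaves `messages["msg-2"]` equal to `"I'll build a weather app."`.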

Shared State is the Killer Feature

Most people hear "streaming protocol" and think text deltas. The part that actually matters for production agent UIs is state synchronization.

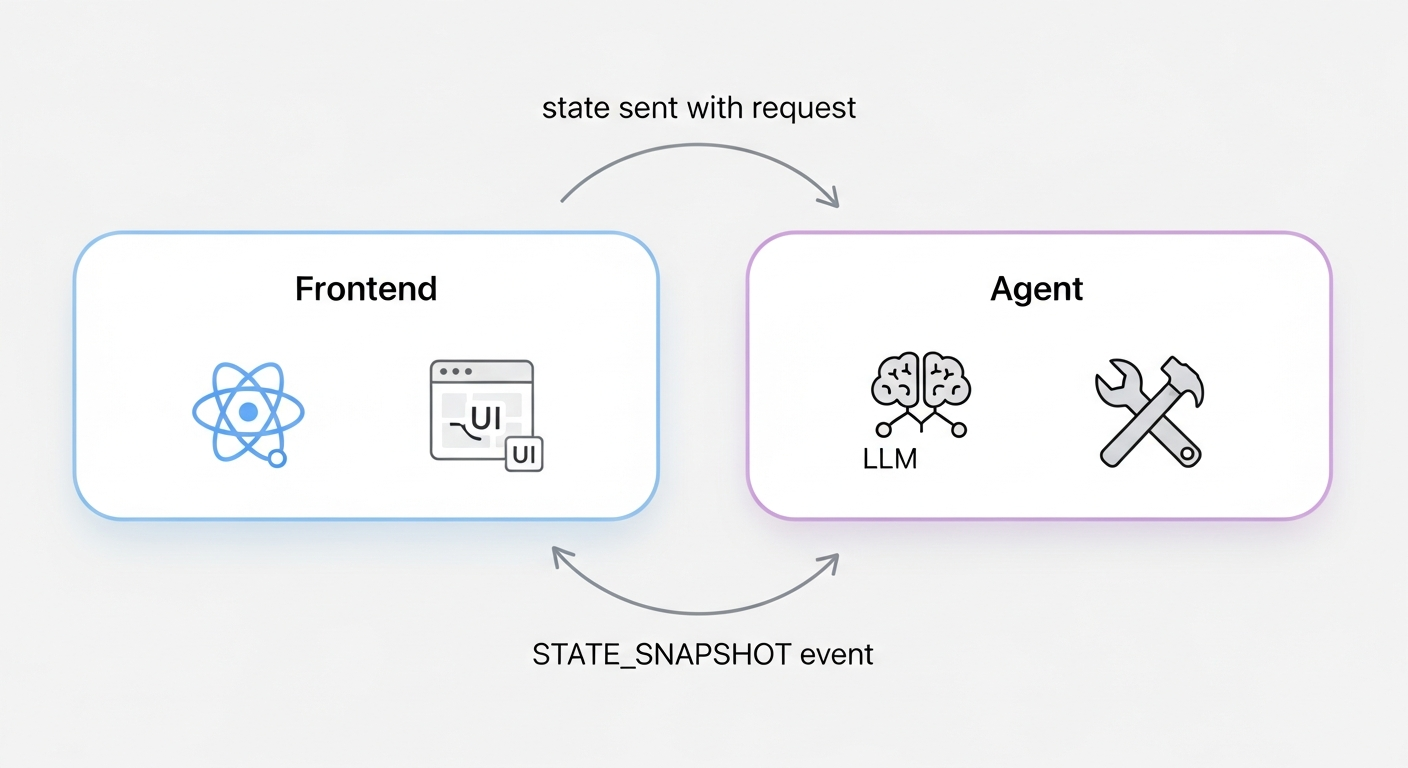

AG-UI's state model is bidirectional:

Bidirectional state sync: the frontend sends accumulated state with each request, the agent pushes updates back via STATE_SNAPSHOT events.

Bidirectional state sync: the frontend sends accumulated state with each request, the agent pushes updates back via STATE_SNAPSHOT events.

- Frontend sends state with each request. The state field in RunAgentInput carries the frontend's current state to the agent.
- Agent reads it to stay aware. A state_context_builder function can inject state into the LLM prompt, for example by prepending "Current files: index.html, app.js" so the model knows what exists.
- Agent pushes state back via events. When a tool modifies state (creates a file, starts a server, plans steps), a STATE_SNAPSHOT event carries the update to the frontend.

This loop means the agent never loses context about what happened in previous turns. The LLM sees the accumulated state in every prompt. The frontend sees every state change in real time. Neither side needs to maintain a separate copy of the truth.
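The prompt-injection half of that loop can be sketched without any framework. The Python below is hypothetical: `describe_state` and `with_state_context` are not the `ag_ui_strands` `state_context_builder` signature, just plain functions showing the idea of turning the `state` field from RunAgentInput into text the model reads before the user's latest message. The state keys match the example request; treating each step as printable is an assumption.

```python
from typing import Any


def describe_state(state: dict[str, Any]) -> str:
    """Render shared state as plain-text context for the LLM prompt."""
    files = state.get("files_created") or []
    lines = ["Current files: " + (", ".join(files) if files else "none")]
    steps = state.get("steps") or []
    if steps:
        # Assumes each step is printable; real step objects may need formatting.
        lines.append("Planned steps: " + "; ".join(str(s) for s in steps))
    if state.get("preview_url"):
        lines.append(f"Preview running at: {state['preview_url']}")
    return "\n".join(lines)


def with_state_context(state: dict[str, Any], user_message: str) -> str:
    """Prepend the state summary so the model sees it before the request."""
    return f"{describe_state(state)}\n\n{user_message}"
```

For example, `with_state_context({"files_created": ["index.html", "app.js"]}, "Add dark mode")` returns `"Current files: index.html, app.js\n\nAdd dark mode"`.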

For incremental updates, STATE_DELTA uses RFC 6902 JSON Patch operations:

```json
{ "type": "STATE_DELTA", "delta": [
  { "op": "add", "path": "/files_created/-", "value": "styles.css" }
]}
```

Use snapshots for bulk updates and deltas for fine-grained changes. Either way, the frontend ends up with one current state object: a snapshot replaces it, a delta patches it.
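Here is a minimal Python sketch of applying both event kinds to the same state object. It implements only the `add`, `replace`, and `remove` operations from RFC 6902, including the `-` index for appending to an array. It skips `move`, `copy`, `test`, the root path, and JSON Pointer escaping (`~0`, `~1`), and it is lenient where the RFC requires errors (for example, `replace` on a missing object member); a production client should use a complete JSON Patch library. `apply_patch` and `apply_state_event` are hypothetical names.

```python
import copy
from typing import Any


def apply_patch(state: dict[str, Any], ops: list[dict[str, Any]]) -> dict[str, Any]:
    """Apply a subset of RFC 6902 (add, replace, remove) to a deep copy of state."""
    doc = copy.deepcopy(state)
    for op in ops:
        kind = op["op"]
        if kind not in ("add", "replace", "remove"):
            raise ValueError(f"unsupported op in this sketch: {kind}")
        # "/files_created/-" -> parents ["files_created"], last "-".
        *parents, last = op["path"].split("/")[1:]
        target: Any = doc
        for key in parents:
            target = target[int(key)] if isinstance(target, list) else target[key]
        if isinstance(target, list):
            if kind == "add":
                # "-" appends; a numeric index inserts before that position.
                target.insert(len(target) if last == "-" else int(last), op["value"])
            elif kind == "replace":
                target[int(last)] = op["value"]
            else:
                del target[int(last)]
        elif kind == "remove":
            del target[last]
        else:
            # On objects, add and replace both set the member.
            target[last] = op["value"]
    return doc


def apply_state_event(state: dict[str, Any], event: dict[str, Any]) -> dict[str, Any]:
    """STATE_SNAPSHOT replaces state wholesale; STATE_DELTA patches it."""
    if event["type"] == "STATE_SNAPSHOT":
        return copy.deepcopy(event["snapshot"])
    if event["type"] == "STATE_DELTA":
        return apply_patch(state, event["delta"])
    return state
```

Returning a new dict rather than mutating in place fits React-style state updates, where a new object reference is what triggers a re-render.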

A Real Stack

Here is what a production AG-UI stack looks like using Python and React:

Backend: Strands Agents SDK + ag_ui_strands

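```python
from strands import Agent
from ag_ui_strands import StrandsAgent, StrandsAgentConfig, ToolBehavior

# bedrock_model, inject_project_state, and track_created_files are
# project-specific and defined elsewhere; tools and system prompt are elided.
agent = Agent(model=bedrock_model, tools=[...], system_prompt="...")

# Wrap the Strands agent so its output is emitted as AG-UI events.
strands_agent = StrandsAgent(
    agent,
    config=StrandsAgentConfig(
        # Injects the RunAgentInput state into the LLM prompt on each turn.
        state_context_builder=inject_project_state,
        tool_behaviors={
            # Update shared state (e.g. files_created) from write_file's arguments.
            "write_file": ToolBehavior(state_from_args=track_created_files),
        },
    ),
)
```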
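In this stack the adapter's role is to turn agent output into AG-UI events, so you do not write that translation yourself. To show the shape of the job, here is a framework-free Python sketch, not the adapter's actual implementation: a hypothetical `stream_text_run` generator that wraps a sequence of model text chunks in lifecycle and text-message events, and reports a failure as `RUN_ERROR` instead of silently dropping the stream.

```python
import uuid
from typing import Any, Iterable, Iterator


def stream_text_run(
    thread_id: str, run_id: str, chunks: Iterable[str]
) -> Iterator[dict[str, Any]]:
    """Wrap model text chunks in RUN_* and TEXT_MESSAGE_* events."""
    yield {"type": "RUN_STARTED", "threadId": thread_id, "runId": run_id}
    message_id = str(uuid.uuid4())
    try:
        yield {"type": "TEXT_MESSAGE_START", "messageId": message_id, "role": "assistant"}
        for chunk in chunks:
            if chunk:  # skip empty deltas
                yield {"type": "TEXT_MESSAGE_CONTENT", "messageId": message_id, "delta": chunk}
        yield {"type": "TEXT_MESSAGE_END", "messageId": message_id}
    except Exception as exc:
        # Surface the failure to the client as the run's final event.
        yield {"type": "RUN_ERROR", "message": str(exc)}
        return
    yield {"type": "RUN_FINISHED", "threadId": thread_id, "runId": run_id}
```

Each yielded dict becomes one `data:` line in the SSE response.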

Frontend: Custom React hook + fetch

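```javascript
// runAgentInput is the RunAgentInput object shown earlier
const response = await fetch("/v3/agent", {
  method: "POST",
  headers: { "Content-Type": "application/json", Accept: "text/event-stream" },
  body: JSON.stringify(runAgentInput),
});

const reader = response.body.getReader();
const decoder = new TextDecoder();
// Loop on reader.read(), decode chunks, split frames on blank lines,
// JSON.parse each "data:" payload, switch on event.type, update React state
```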

The backend does not know or care what frontend framework consumes the events. The frontend does not know or care what agent framework produces them. The protocol is the contract.

Where AG-UI Is Headed

The protocol has several event types in draft status that hint at the roadmap:

- Activity Events (ACTIVITY_SNAPSHOT, ACTIVITY_DELTA) for structured progress updates between chat messages
- Reasoning Events (REASONING_START, REASONING_MESSAGE_CONTENT, REASONING_END) for chain-of-thought visibility with privacy controls
- Meta Events (META_EVENT) for side-band annotations like user feedback or external signals
- Extended Lifecycle Events with interrupt/resume support for human-in-the-loop workflows

The core event set is stable. The extensions are where multi-agent orchestration, reasoning transparency, and collaborative workflows are being designed.

If you are building an AI agent with a custom frontend, AG-UI is worth adopting now. The protocol is small enough to implement in a weekend and structured enough to scale to production. And when you inevitably swap your agent backend six months from now, your frontend will not need to change.
