We Tried CopilotKit. Then We Built Our Own AG-UI Chat Layer.

We were building an AI prototyping tool. The kind where you chat with an agent and it writes code, spins up a dev server, and renders your app in a phone simulator. In real time. Streaming text, inline tool call cards, state synchronization, generative UI components. The whole deal.

CopilotKit was a reasonable starting point. It had AG-UI protocol support and React hooks. So we integrated it, shipped a prototype, and started running into friction with the framework.

What We Ran Into

We evaluated CopilotKit v1.50 and v1.51. Here is what we encountered:

- Agent registration issues. Our agent would silently fail to register in certain mount orders. Debugging meant reading through layers of abstraction with no clear error surface.

- Framework lock-in. CopilotKit owns the SSE connection lifecycle. We could not control reconnection behavior, abort signals, or buffer parsing.

- Opaque abstractions. Raw AG-UI events were hidden behind hooks. We needed access to `TOOL_CALL_ARGS` deltas to render inline tool cards as they stream. Not possible without patching the library.

- State sync was all-or-nothing. We needed per-tool state snapshots (files accumulate, steps replace, preview URL overwrites). CopilotKit's shared state model did not support this granularity.

After two weeks of workarounds, we made the call: rip it out and go direct.

The Alternative: Raw AG-UI + fetch

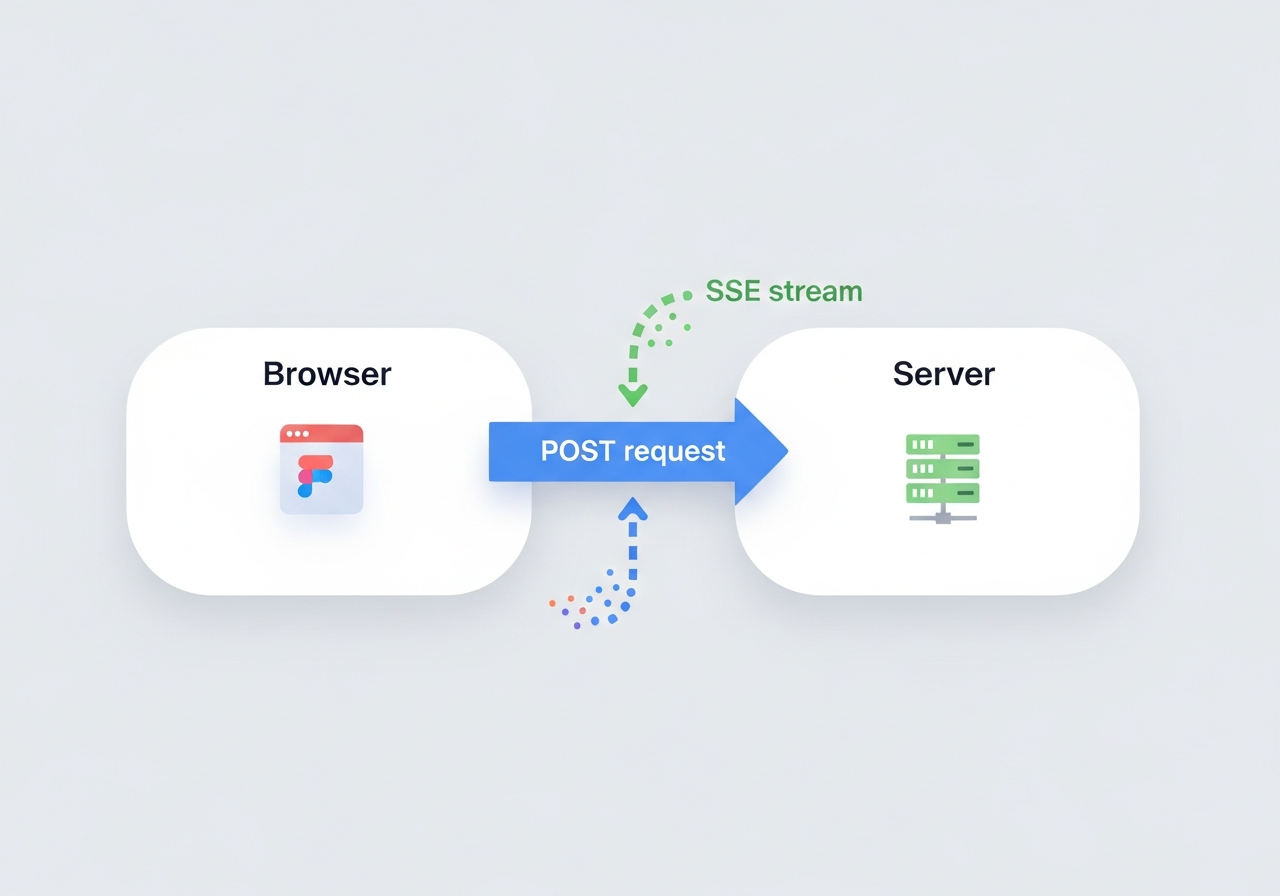

Our streaming layer: the browser sends a POST request with the full conversation state, and the server streams back AG-UI events over SSE -- text deltas, tool calls, and state snapshots.


The core pattern is surprisingly simple. AG-UI events are JSON payloads streamed over SSE. You do not need a framework to parse them.

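```typescript
// Parses complete SSE lines into AG-UI events. Callers should pass whole
// lines: a line split across network chunks fails JSON.parse and is skipped.
function parseSSELines(chunk: string): AGUIEvent[] {
  const events: AGUIEvent[] = [];
  for (const line of chunk.split("\n")) {
    const trimmed = line.trim();
    if (trimmed.startsWith("data:")) {
      const json = trimmed.slice(5).trim();
      if (json && json !== "[DONE]") {
        try {
          events.push(JSON.parse(json));
        } catch {
          // Ignore malformed payloads.
        }
      }
    }
  }
  return events;
}
```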
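A network chunk can end in the middle of a line, so a per-chunk parser like the one above is usually paired with a small buffer that holds back the trailing partial line. The following is a minimal sketch of that idea, not the code from our hook; `createLineBuffer` is a hypothetical name introduced for illustration.

```typescript
// Hypothetical helper: returns a function that accepts raw text chunks and
// hands back only the lines that are known to be complete.
function createLineBuffer(): (chunk: string) => string[] {
  let pending = ""; // text received after the last newline seen so far

  return (chunk: string): string[] => {
    pending += chunk;
    const lines = pending.split("\n");
    // The last element is either "" (the chunk ended on a newline) or a
    // partial line. Keep it for the next call.
    pending = lines.pop() ?? "";
    // Tolerate CRLF line endings.
    return lines.map((line) => line.replace(/\r$/, ""));
  };
}

// Usage: one JSON payload arrives split across two chunks.
const push = createLineBuffer();
const first = push('data: {"type":"TEXT_MESS'); // nothing complete yet: []
const second = push('AGE_CONTENT","delta":"hi"}\n\n');
// second[0] now holds the whole "data: ..." line and can be parsed safely.
```

Joining the returned lines with `"\n"` and passing them to the parser keeps the parser itself unchanged.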

The connection uses fetch with a ReadableStream, not EventSource. This matters because EventSource only supports GET requests. AG-UI requires POST with a JSON body.

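```typescript
const response = await fetch("/v3/agent", {
  method: "POST",
  headers: { "Content-Type": "application/json", Accept: "text/event-stream" },
  body: JSON.stringify({
    runId: crypto.randomUUID(),
    threadId: threadIdRef.current,
    messages: allMessages,
    state: agentState,
    tools: [],
    context: [],
    forwardedProps: {},
  }),
});
// Guard against HTTP errors and a missing body before reading the stream.
if (!response.ok || !response.body) {
  throw new Error(`Agent request failed with status ${response.status}`);
}
const reader = response.body.getReader();
```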
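Once you have a reader, the bytes still need to be decoded into text before any line parsing happens. Below is a rough sketch of that read loop, assuming a standard `ReadableStream<Uint8Array>` body; `decodeStream` is a hypothetical helper name, and the real hook may be structured differently.

```typescript
// Hypothetical helper: turns a byte stream into decoded text chunks.
async function* decodeStream(
  body: ReadableStream<Uint8Array>,
): AsyncGenerator<string> {
  const reader = body.getReader();
  const decoder = new TextDecoder("utf-8");
  try {
    while (true) {
      const { done, value } = await reader.read();
      if (done) break;
      // stream: true keeps an incomplete multi-byte character buffered
      // until the rest of it arrives in a later chunk.
      const text = decoder.decode(value, { stream: true });
      if (text) yield text;
    }
    // Flush anything the decoder is still holding.
    const tail = decoder.decode();
    if (tail) yield tail;
  } finally {
    reader.releaseLock();
  }
}
```

Cancellation fits in at the request level: passing an `AbortController`'s `signal` in the `fetch` options makes the pending `read()` reject when the controller aborts, so the loop exits through the `finally` block.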

Our entire streaming layer lives in a single React hook called useAgentStream. It returns:

```typescript
const {
  messages,      // ChatMessage[] with interleaved content blocks
  agentState,    // { steps, files_created, preview_url, project_name }
  isStreaming,   // boolean
  sendMessage,   // (text, images?) => Promise<void>
  clearMessages, // () => void (generates new threadId)
} = useAgentStream();
```
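To give a feel for how `messages` could end up holding interleaved blocks, here is a simplified sketch of folding events into an ordered block list. The block field names (`text`, `toolCallId`, `name`, `args`) are assumptions made for illustration, and the event fields follow our reading of the AG-UI event types, so check them against the protocol reference before relying on them.

```typescript
// Assumed block shapes; only the two "type" values come from our hook.
type ContentBlock =
  | { type: "text"; text: string }
  | { type: "tool_call"; toolCallId: string; name: string; args: string };

// A small subset of AG-UI events (field names are an assumption).
type StreamEvent =
  | { type: "TEXT_MESSAGE_CONTENT"; delta: string }
  | { type: "TOOL_CALL_START"; toolCallId: string; toolCallName: string }
  | { type: "TOOL_CALL_ARGS"; toolCallId: string; delta: string };

function applyEvent(blocks: ContentBlock[], event: StreamEvent): ContentBlock[] {
  switch (event.type) {
    case "TEXT_MESSAGE_CONTENT": {
      const last = blocks[blocks.length - 1];
      // Extend the trailing text block, or open a new one after a tool card.
      if (last && last.type === "text") {
        return [...blocks.slice(0, -1), { ...last, text: last.text + event.delta }];
      }
      return [...blocks, { type: "text", text: event.delta }];
    }
    case "TOOL_CALL_START":
      // A tool card appears inline at the current position.
      return [
        ...blocks,
        { type: "tool_call", toolCallId: event.toolCallId, name: event.toolCallName, args: "" },
      ];
    case "TOOL_CALL_ARGS":
      // Append the streamed argument delta to the matching tool card.
      return blocks.map((block) =>
        block.type === "tool_call" && block.toolCallId === event.toolCallId
          ? { ...block, args: block.args + event.delta }
          : block,
      );
    default:
      return blocks;
  }
}
```

Returning a new array on every event keeps the function usable as a React state updater.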

What We Got

Going custom gave us things we could not get from a framework:

- ContentBlocks for interleaved rendering. Each assistant message contains ordered blocks of `{ type: "text" }` and `{ type: "tool_call" }`. Text streams in, a tool card appears inline, more text streams after it.

- Per-tool STATE_SNAPSHOT merging. When `write_file` fires, `files_created` accumulates via Set union. When `plan_task_steps` fires, `steps` replaces entirely. When `start_dev_server` finishes, `preview_url` overwrites. Each tool has its own merge strategy.

- Generative UI via state events. The agent calls `search_images`, the backend emits a `STATE_SNAPSHOT` with `custom_ui_components`, and the frontend renders an `<ImageGrid />` inline in the chat. No framework extension points needed.

- Multimodal support. Images pasted into chat are converted from AG-UI `BinaryInputContent` to the agent's native format in the state context builder. One function, twelve lines.

- Silent failure detection. If the SSE stream closes without a `RUN_FINISHED` or `RUN_ERROR` event (e.g., expired credentials causing an abrupt disconnect), we surface a helpful error instead of showing nothing.
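The merge strategies and the silent failure check from the list above can be sketched in a few lines. This is an illustration under assumptions, not our exact code: the element types in `AgentState` are guesses, the merge is keyed by state field because that is the simplest way to show it, and `mergeSnapshot` and `endedCleanly` are hypothetical names.

```typescript
// Field names come from the hook's agentState; the value types are assumed.
interface AgentState {
  steps: string[];
  files_created: string[];
  preview_url: string | null;
  project_name: string | null;
}

// Applies a STATE_SNAPSHOT payload with a different strategy per field.
function mergeSnapshot(prev: AgentState, snapshot: Partial<AgentState>): AgentState {
  return {
    // Accumulate: set union of old and new paths, first-seen order kept.
    files_created: snapshot.files_created
      ? Array.from(new Set([...prev.files_created, ...snapshot.files_created]))
      : prev.files_created,
    // Replace: a new plan discards the old one entirely.
    steps: snapshot.steps ?? prev.steps,
    // Overwrite: the latest value wins.
    preview_url: snapshot.preview_url ?? prev.preview_url,
    project_name: snapshot.project_name ?? prev.project_name,
  };
}

// True when the stream contained a terminal event. A stream that closes
// without one is treated as a silent failure.
function endedCleanly(eventTypes: string[]): boolean {
  return eventTypes.some((t) => t === "RUN_FINISHED" || t === "RUN_ERROR");
}
```

After the read loop ends, a check such as `if (!endedCleanly(seenTypes))` is the point where an error message can be shown to the user.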

Total size: ~500 lines of TypeScript. Zero external dependencies beyond React.

Should You Do This?

Honest take:

- If your agent is text-in, text-out with basic tool calls and you do not need fine-grained state control: use CopilotKit. It handles the common case well and saves real time.

- If you need any of the following, consider going custom:

  - Inline tool call cards interleaved with streaming text

  - Per-tool state merge strategies

  - Generative UI (agent-rendered React components)

  - Multimodal input (images)

  - Custom error handling and reconnection logic

  - Full visibility into raw AG-UI events

The AG-UI protocol is well-designed enough that you do not need a framework to use it. A fetch call, a line parser, and a switch statement on event types get you 90% of the way there.

The remaining 10% is where your app gets interesting.
