Why Protocols Matter More Than Your Code

What building an AI coding assistant taught me about the most underrated concept in software engineering.

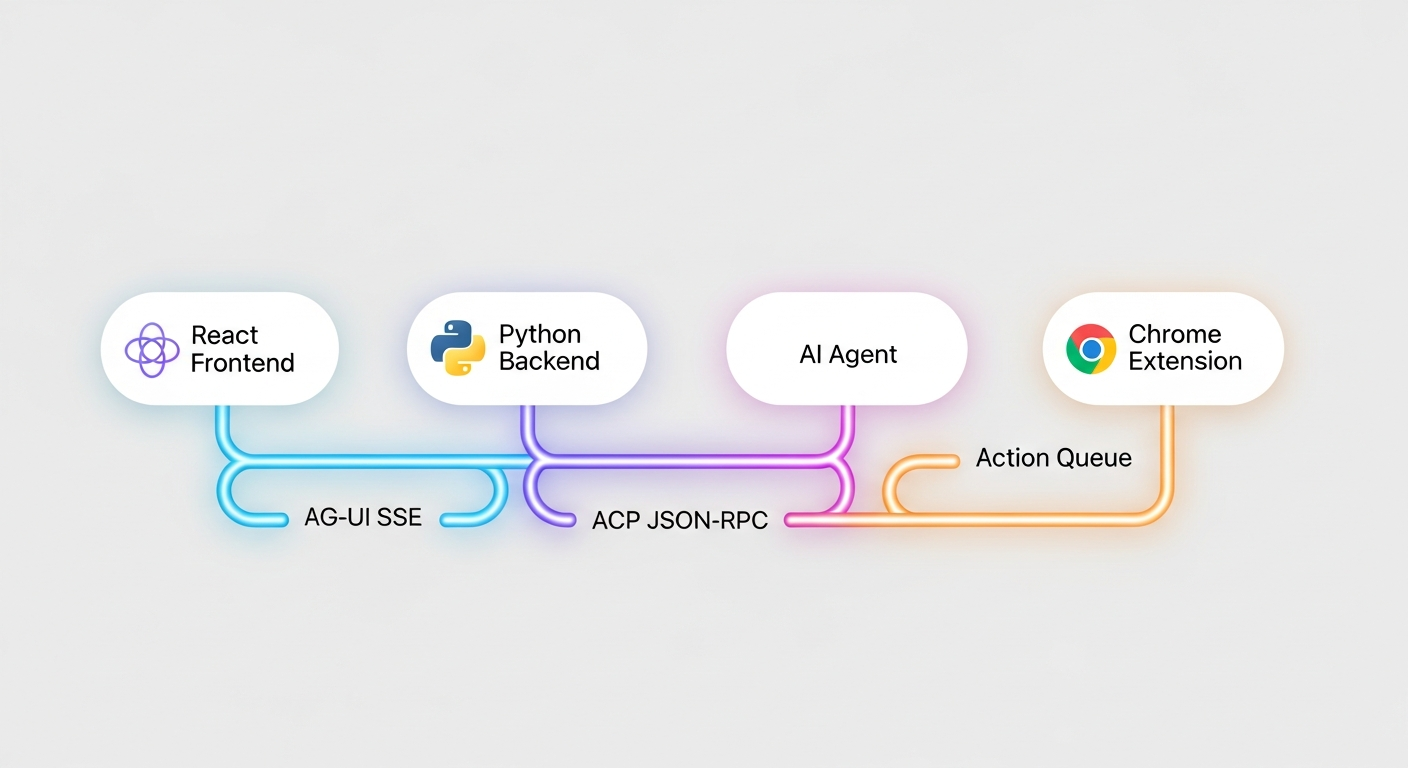

I recently built a browser-based AI coding assistant. React frontend, Python backend, Chrome extension, real-time streaming, tool approval system, the works. It talks to an AI agent over one protocol, streams results to the UI over another, and dispatches browser actions to a Chrome extension over a third.

Three protocols. Three boundaries. And I'm convinced those boundaries are the single best architectural decision in the entire project.

Here's the thing nobody tells you when you're learning to code: the protocols between your systems matter more than the systems themselves. The code inside each box is replaceable. The contracts between the boxes are what hold everything together.

What I Mean by "Protocol"

I'm not talking about HTTP or TCP. I mean something simpler: a defined agreement about how two pieces of software communicate. What messages can be sent. What responses are expected. What happens when something goes wrong.

In my project, there are three of these:

ACP (Agent Client Protocol) sits between my backend and the AI agent. It's JSON-RPC 2.0 over stdin/stdout. I send prompts, and the agent streams back text, tool calls, and permission requests.

AG-UI sits between my backend and the React frontend. It's Server-Sent Events carrying a defined set of event types: run started, text message content, tool call started, and so on.

A browser action queue sits between my backend and the Chrome extension. Actions go in, results come back, futures get resolved.

Three protocols. Three clean seams in the architecture. And every single benefit I'm about to describe flows from those seams.
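To make the third seam concrete, here is a minimal sketch of an action queue where "actions go in, results come back, futures get resolved." It assumes an asyncio backend. The names `ActionQueue`, `BrowserAction`, `dispatch`, `next_action`, and `resolve` are hypothetical illustrations, not the project's actual code.

```python
import asyncio
import itertools
from dataclasses import dataclass
from typing import Any, Dict


@dataclass
class BrowserAction:
    action_id: int
    name: str
    params: Dict[str, Any]


class ActionQueue:
    """Backend side of the seam: enqueue an action, then await its result."""

    def __init__(self) -> None:
        self._ids = itertools.count(1)
        self._pending: "asyncio.Queue[BrowserAction]" = asyncio.Queue()
        self._futures: Dict[int, asyncio.Future] = {}

    async def dispatch(self, name: str, params: Dict[str, Any]) -> Any:
        # Actions go in: register a future, then hand the action to the queue.
        action = BrowserAction(next(self._ids), name, params)
        future = asyncio.get_running_loop().create_future()
        self._futures[action.action_id] = future
        await self._pending.put(action)
        return await future

    async def next_action(self) -> BrowserAction:
        # Called on behalf of the extension to fetch the next action.
        return await self._pending.get()

    def resolve(self, action_id: int, result: Any) -> None:
        # Results come back: resolve the future that dispatch() is awaiting.
        future = self._futures.pop(action_id, None)
        if future is not None and not future.done():
            future.set_result(result)


async def demo() -> Any:
    queue = ActionQueue()

    async def fake_extension() -> None:
        # Stand-in for the Chrome extension (an assumption for this demo).
        action = await queue.next_action()
        queue.resolve(action.action_id, {"ok": True, "echo": action.name})

    worker = asyncio.create_task(fake_extension())
    result = await queue.dispatch("click", {"selector": "#submit"})
    await worker
    return result
```

The caller of `dispatch` never learns how the action was carried out. It only sees a result arrive for the id it sent, which is the whole contract.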

Three protocols, three clean seams: AG-UI between frontend and backend, ACP between backend and agent, and an action queue to the browser extension.


Protocols Are Boundaries of Ignorance

This is the key insight, and it sounds almost too simple: a protocol lets each side not know about the other.

My React frontend has no idea that kiro-cli, the agent my backend drives, exists. It doesn't know the agent runs as a subprocess. It doesn't know about JSON-RPC or stdin/stdout. It connects to an SSE endpoint, receives AG-UI events, and renders them. That's it.

My AI agent has no idea there's a browser involved. It discovers tools during session creation, calls them by name, and gets results back. Whether those tools are executed by a Chrome extension, a Puppeteer instance, or a trained monkey with a keyboard is completely invisible to it.

This ignorance is not a bug. It's the entire point.

When I rewrote the frontend event handling, I didn't touch the backend. When I changed how browser tools work, I didn't touch the chat system. When the AI agent's behavior changed between versions, the frontend didn't notice because the protocol stayed stable.

Each protocol is a firewall against complexity. Cross it, and you enter a different world with different rules. That's liberating.

Why This Matters for Vibe-Coding

If you're building fast with AI assistance, you're generating a lot of code quickly. And code generated quickly has a tendency to become a tangled ball of dependencies if you're not careful.

Protocols are the antidote.

When I started this project, the first thing I defined was the event types. What does a TOOL_CALL_START event look like? What fields does a STATE_UPDATE carry? I wrote maybe twenty lines of type definitions. It took fifteen minutes.

Those fifteen minutes saved me weeks. Every time I asked the AI to help build a component, I could say "this component receives AG-UI events with this shape" and the generated code was automatically scoped. It couldn't reach into the backend. It couldn't make assumptions about the agent. The protocol was the constraint, and constraints are what make AI-generated code actually usable.
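As an illustration of how small such definitions can be, here is a sketch in Python. The event names come from the text above. The field names (`tool_call_id`, `tool_name`, `message_id`, `delta`) and the `to_sse` helper are assumptions made for this example, and the original definitions may well have lived in TypeScript on the frontend side.

```python
import json
from dataclasses import asdict, dataclass
from enum import Enum


class EventType(str, Enum):
    RUN_STARTED = "RUN_STARTED"
    TEXT_MESSAGE_CONTENT = "TEXT_MESSAGE_CONTENT"
    TOOL_CALL_START = "TOOL_CALL_START"
    STATE_UPDATE = "STATE_UPDATE"


@dataclass(frozen=True)
class ToolCallStart:
    tool_call_id: str  # assumed field name
    tool_name: str     # assumed field name
    type: EventType = EventType.TOOL_CALL_START


@dataclass(frozen=True)
class TextMessageContent:
    message_id: str  # assumed field name
    delta: str       # the chunk of text streamed in this event
    type: EventType = EventType.TEXT_MESSAGE_CONTENT


def to_sse(event) -> str:
    """Serialize one event as a Server-Sent Events data frame."""
    payload = asdict(event)
    payload["type"] = event.type.value  # emit the plain string, not the Enum
    return f"data: {json.dumps(payload)}\n\n"
```

A component that is told "you receive `ToolCallStart` and `TextMessageContent`, nothing else" has very little room to grow dependencies on anything behind the stream.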

Without protocols, vibe-coding produces spaghetti. With protocols, it produces modules. Same AI, same speed, wildly different outcomes.

Why This Matters for Applied AI

Here's where it gets genuinely important.

AI agents are unpredictable. You do not control what an LLM decides to do. It might try to delete your home directory. It might try to make network requests you didn't expect. It might hallucinate a tool that doesn't exist and call it with confidence.

Protocols are how you tame that chaos.

In ACP, when an agent wants to do something potentially dangerous, it sends a session/request_permission message. This is a JSON-RPC request, not a notification: it carries an id, and a conforming agent waits for the response with that id before it proceeds. The protocol enforces the gate. Not the agent's good behavior, not a prompt asking it to be careful, not a hope and a prayer. The wire format itself requires a response before execution continues.
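The request-versus-notification distinction can be sketched in a few lines. In JSON-RPC 2.0, a message with both `id` and `method` is a request that must be answered with the same `id`, while a message without `id` is a notification. The `handle_agent_message` function and the `approve` callback are hypothetical, and the `result` payload is a deliberately simplified assumption rather than ACP's actual response schema.

```python
import json
from typing import Callable, Optional


def handle_agent_message(line: str, approve: Callable[[dict], bool]) -> Optional[str]:
    """Return the JSON-RPC response line to write back, or None if no reply is due."""
    message = json.loads(line)
    is_request = "id" in message and "method" in message
    if not is_request:
        # A notification, or a response to one of our own requests.
        return None

    if message["method"] == "session/request_permission":
        allowed = approve(message.get("params", {}))
        # Simplified, assumed result shape; the real schema is richer.
        result = {"outcome": "allowed" if allowed else "denied"}
        response = {"jsonrpc": "2.0", "id": message["id"], "result": result}
    else:
        # Standard JSON-RPC 2.0 "Method not found" error.
        response = {
            "jsonrpc": "2.0",
            "id": message["id"],
            "error": {"code": -32601, "message": "Method not found"},
        }
    return json.dumps(response)
```

The gate lives in the `id`: until a response carrying that `id` is written back, the agent's pending call has nothing to resume on.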

My backend has a policy engine that evaluates every tool call. Filesystem operation? Flag it. Shell command? Flag it. Network request? Flag it. The engine is forty lines of code. It works because the protocol guarantees it sees every tool call before execution. No tool call can sneak past, because the protocol doesn't have a path for that.
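A policy engine of this kind can be very small. The sketch below is one possible shape, not the project's actual forty lines. The `ToolCall` type, its `kind` field, and the category strings are assumptions made for illustration.

```python
from dataclasses import dataclass
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"
    ASK_HUMAN = "ask_human"


@dataclass(frozen=True)
class ToolCall:
    name: str
    kind: str  # assumed categories, e.g. "filesystem", "shell", "network"


# Categories that always require human approval, per the rules described above.
FLAGGED_KINDS = {"filesystem", "shell", "network"}


def evaluate(call: ToolCall) -> Decision:
    """Decide whether a tool call may run or must wait for a human."""
    if call.kind in FLAGGED_KINDS:
        return Decision.ASK_HUMAN
    return Decision.ALLOW
```

A stricter variant would invert the check into an allowlist, so that any category the engine has never seen is flagged by default.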

This is the difference between "we asked the AI to be safe" and "the architecture makes unsafe actions impossible without human approval." Protocols give you the second one.

Composability: The Prize at the End

When your systems talk through protocols, you get composability for free.

MCP (Model Context Protocol) means any tool server can plug into any agent. I can add browser tools, database tools, or Slack tools just by declaring them in a config file. The agent discovers them at session start and calls them by name.

ACP means any client can talk to any agent. My Python backend could talk to kiro-cli today and a different agent tomorrow. The backend doesn't care. The protocol is the interface.

AG-UI means any frontend can consume the event stream. A React app, a terminal client, a mobile app. Same events, different renderers.

I have a bridge component that translates between ACP and AG-UI in real time. It's 250 lines. It tracks open messages, manages tool call state, buffers notifications that arrive before a run starts, and closes everything cleanly when the agent's turn ends. It's the most complex piece of protocol machinery in the project, and it's 250 lines. That's only possible because protocols did the hard work of defining what goes where.
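A heavily reduced sketch of such a bridge shows the three responsibilities named above: buffering updates that arrive before a run starts, tracking the open message, and closing everything at the end of the turn. The `Bridge` class and the `{"kind": ...}` update shape are assumptions made for this example, not ACP's real payloads. The event names follow AG-UI's naming style, but the fields are simplified.

```python
from typing import Any, Dict, List, Optional

Event = Dict[str, Any]


class Bridge:
    """Translates simplified agent updates into UI events."""

    def __init__(self) -> None:
        self.run_started = False
        self.open_message_id: Optional[str] = None
        self._buffer: List[Dict[str, Any]] = []
        self._next_id = 0

    def start_run(self) -> List[Event]:
        self.run_started = True
        events: List[Event] = [{"type": "RUN_STARTED"}]
        # Replay anything that arrived before the run started.
        buffered, self._buffer = self._buffer, []
        for update in buffered:
            events.extend(self.on_update(update))
        return events

    def on_update(self, update: Dict[str, Any]) -> List[Event]:
        if not self.run_started:
            self._buffer.append(update)
            return []
        events: List[Event] = []
        if update["kind"] == "text":
            if self.open_message_id is None:
                self._next_id += 1
                self.open_message_id = f"msg-{self._next_id}"
                events.append({"type": "TEXT_MESSAGE_START", "message_id": self.open_message_id})
            events.append({
                "type": "TEXT_MESSAGE_CONTENT",
                "message_id": self.open_message_id,
                "delta": update["text"],
            })
        elif update["kind"] == "tool_call":
            # A tool call ends the text message that was being streamed.
            events.extend(self._close_message())
            events.append({
                "type": "TOOL_CALL_START",
                "tool_call_id": update["id"],
                "tool_name": update["name"],
            })
        return events

    def end_turn(self) -> List[Event]:
        events = self._close_message()
        events.append({"type": "RUN_FINISHED"})
        self.run_started = False
        return events

    def _close_message(self) -> List[Event]:
        if self.open_message_id is None:
            return []
        closed = {"type": "TEXT_MESSAGE_END", "message_id": self.open_message_id}
        self.open_message_id = None
        return [closed]
```

Every branch here is dictated by one of the two protocols, which is why the state the bridge has to track stays so small.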

The Takeaway

Protocols are not glamorous. Nobody's writing breathless blog posts about JSON-RPC message schemas. But every system I've built that aged well had clean protocol boundaries, and every system that turned into a maintenance nightmare didn't.

If you're building with AI, this matters doubly. AI makes code cheap to produce and expensive to reason about. Protocols are how you keep the reasoning tractable. They give you walls you can lean on, interfaces you can test against, and boundaries that hold even when the code on either side is changing fast.

Define your protocols first. Make them simple. Make them explicit. Then let everything else be messy, because the boundaries will save you.

The code inside each box is temporary. The contracts between the boxes are architecture.

This is the first of two articles drawn from building a browser-based AI coding assistant. The second goes deep on ACP, the protocol that lets AI agents and developer tools speak a common language.